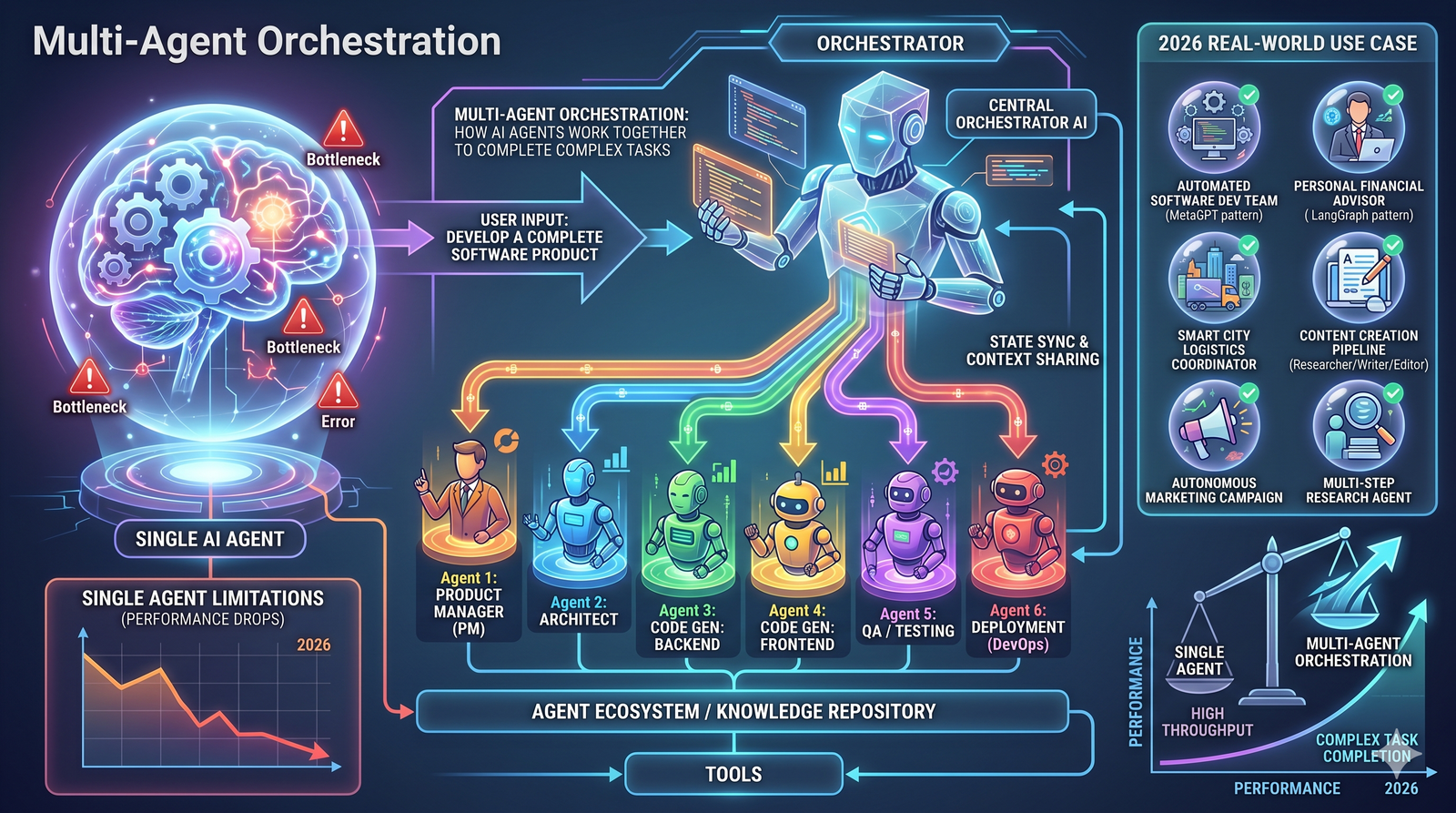

Multi-Agent Orchestration: How AI Agents Work Together to Complete Complex Tasks

Multi-agent orchestration splits complex work across specialized AI agents working in parallel. Learn how it works, why it outperforms single agents, and where it's being used in 2026.

Ask one person to write a report, analyze a dataset, design a presentation, and check everything for errors — all at the same time. They'll do a bad job. Not because they're incapable, but because doing four different things at once is a terrible way to work.

AI has the same problem.

When you ask a single AI agent to handle a complex task with many parts, quality drops as the work piles up. A 2026 study from Mount Sinai Hospital tested this directly. At 80 simultaneous tasks, a single AI agent's accuracy fell to 16.6%. A coordinated team of specialized agents handling the same workload held at 65.3%, while using 65 times fewer computing resources.

That's the core idea behind multi-agent orchestration. Instead of one AI doing everything, you split the work across multiple specialized agents that run in parallel, each handling what it's best at. A coordinator brings the results together into one finished output.

This article explains how multi-agent orchestration works, when it actually helps, when it doesn't, and why it's becoming the standard architecture for serious AI work in 2026.

The Problem With Single Agents

To understand why multi-agent systems exist, you need to understand where single agents break down.

Every AI model has a context window — a limit on how much information it can hold in memory at once. Think of it like a desk. A small desk works fine when you're reading one document. But pile 50 documents on it, and things start falling off the edges.

When a single agent processes a long, complex task, three things happen.

Quality degrades. The agent gives its best work to the first few items. As the task grows longer, responses get shorter, less detailed, and less accurate. By the end, the model may fabricate information to fill gaps. Researchers call this "context rot" — performance drops as the context window fills up.

Errors compound. When one agent handles every step in sequence, a mistake in step three affects everything that follows. Coordination changes how far those mistakes spread: research on multi-step agent tasks shows that without coordination, errors are amplified by a factor of 17.2, while a central orchestrator managing the work brings that down to 4.4.

Speed bottlenecks. A single agent processes steps one after another. If step five takes a long time, everything after it waits. There's no way to work on independent tasks simultaneously.

These aren't edge cases. They're fundamental limits of how large language models work. And they become obvious the moment you try to use AI for real business tasks that involve more than a simple question and answer.
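To make the compounding point concrete, here is a small worked example. It assumes, purely for illustration, that every step succeeds independently with the same probability. The 95% figure and the function name `chain_success` are made up for this sketch and do not come from the studies cited in this article.

```python
def chain_success(per_step: float, steps: int) -> float:
    """Chance that every step in a strictly sequential chain is correct,
    assuming independent steps with an identical success rate."""
    return per_step ** steps


# Illustrative numbers only: a 95%-reliable step, repeated in sequence.
for steps in (1, 5, 10, 20):
    print(f"{steps:>2} steps -> {chain_success(0.95, steps):.3f}")
```

Under these assumptions, a chain of 10 steps finishes without error only about 60% of the time, and a chain of 20 steps about 36% of the time. A long single-agent sequence is fragile even when each individual step looks reliable.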

What Is Multi-Agent Orchestration?

Multi-agent orchestration is an architecture where multiple specialized AI agents work together under the direction of a coordinator to complete a complex task.

Instead of one generalist agent trying to do everything, the work gets split across specialists. Each agent has its own context window, its own focus, and its own tools. They run in parallel when possible and pass their results back to the coordinator, which assembles the final output.

The concept mirrors how effective human teams work. A project manager doesn't write the code, design the slides, and analyze the data. They break the project into pieces, assign each piece to the right person, track progress, and assemble the final deliverable. Multi-agent orchestration works the same way — except the "team members" are AI agents.

Here's a simple example. Say you ask an AI system to research 50 companies and produce a competitive analysis. In a single-agent setup, one model processes all 50 companies sequentially. By company 30, quality has noticeably declined.

In a multi-agent setup, a coordinator agent receives your request and launches 50 independent research agents, one per company. Each agent gets its own fresh context window and full attention for its one company. A synthesis agent then takes all 50 results and compiles the final report. Every company gets the same depth of analysis, and because the research agents run in parallel, the whole process finishes far sooner than 50 sequential passes would.

The Four Patterns of Multi-Agent Architecture

Not all multi-agent systems work the same way. There are four main patterns, and each one fits different types of work.

Sequential Pipeline

Agents work one after another, each handling a specific stage. Agent A does research. Agent B takes that research and writes a draft. Agent C edits the draft. Agent D formats the final output.

This pattern works best when each step depends on the one before it. Think of it like an assembly line. The output of one station becomes the input for the next.

Best for: Document creation, content pipelines, any task where steps have a natural order.
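A sequential pipeline can be sketched in a few lines. This is a minimal illustration, not a production design: the four stage functions are hypothetical stand-ins for agents A through D, and each one just transforms a string where a real agent would call a model.

```python
from typing import Callable, List

Stage = Callable[[str], str]


def research(topic: str) -> str:
    # Agent A: stand-in for a research agent.
    return f"notes on {topic}"


def write_draft(notes: str) -> str:
    # Agent B: turns research notes into a draft.
    return f"draft from {notes}"


def edit(draft: str) -> str:
    # Agent C: edits the draft.
    return draft.replace("draft", "edited draft", 1)


def format_output(text: str) -> str:
    # Agent D: formats the final output.
    return f"REPORT: {text}"


def run_pipeline(stages: List[Stage], task: str) -> str:
    """Feed each stage's output into the next stage, in order."""
    result = task
    for stage in stages:
        result = stage(result)
    return result


final = run_pipeline([research, write_draft, edit, format_output], "solar storage")
print(final)
```

Because every stage waits for the previous one, total time is the sum of all stages. That is the trade-off this pattern accepts in exchange for a clear, ordered handoff.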

Parallel Fan-Out

The coordinator sends the same type of task to many agents at once. Each agent works independently on its own portion. Results are collected and merged at the end.

This is the pattern used for analyzing large batches — 100 companies, 500 survey responses, 200 research papers. Each agent gets one item and processes it with full attention.

Best for: Large-scale data processing, batch analysis, research across many sources.
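The fan-out pattern maps naturally onto Python's `asyncio`. The sketch below assumes a hypothetical `research_company` coroutine standing in for one sub-agent, and a `max_parallel` cap, which is a design choice added here to avoid launching an unbounded number of agents at once.

```python
import asyncio
from typing import Dict, List


async def research_company(name: str) -> Dict[str, str]:
    # Stand-in for one sub-agent; a real agent would call a model here.
    await asyncio.sleep(0)
    return {"company": name, "summary": f"findings for {name}"}


async def fan_out(companies: List[str], max_parallel: int = 10) -> List[Dict[str, str]]:
    limit = asyncio.Semaphore(max_parallel)  # cap on concurrent agents

    async def bounded(name: str) -> Dict[str, str]:
        async with limit:
            return await research_company(name)

    # gather() returns results in the same order as the inputs.
    return await asyncio.gather(*(bounded(c) for c in companies))


def merge(results: List[Dict[str, str]]) -> str:
    # Collect and merge the per-item results into one output.
    return "\n".join(f"{r['company']}: {r['summary']}" for r in results)


results = asyncio.run(fan_out(["Acme", "Globex", "Initech"]))
report = merge(results)
print(report)
```

Each item is processed by its own call with no shared state, which mirrors the "one item, full attention" idea described above.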

Specialist Delegation

The coordinator analyzes the task and routes different parts to agents with different specializations. A coding agent handles the technical work. A writing agent handles the report. A data agent handles the analysis.

This pattern is about matching the right skill to the right subtask, not just splitting volume.

Best for: Complex projects that require multiple types of expertise — like building a product demo that involves code, design, and documentation.
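One simple way to express specialist delegation is a registry that maps a subtask's type to the agent responsible for it. The names below (`Subtask`, `SPECIALISTS`, `delegate`) are hypothetical, and the three agents are placeholder functions rather than real model calls.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass
class Subtask:
    kind: str      # which specialization the subtask needs
    payload: str   # what the specialist is asked to do


def coding_agent(payload: str) -> str:
    return f"[code] {payload}"


def writing_agent(payload: str) -> str:
    return f"[writing] {payload}"


def data_agent(payload: str) -> str:
    return f"[data] {payload}"


SPECIALISTS: Dict[str, Callable[[str], str]] = {
    "code": coding_agent,
    "writing": writing_agent,
    "data": data_agent,
}


def delegate(subtasks: List[Subtask]) -> List[str]:
    """Route each subtask to the specialist registered for its kind."""
    outputs = []
    for subtask in subtasks:
        specialist = SPECIALISTS.get(subtask.kind)
        if specialist is None:
            raise ValueError(f"no specialist registered for {subtask.kind!r}")
        outputs.append(specialist(subtask.payload))
    return outputs


print(delegate([Subtask("code", "build the demo"), Subtask("writing", "write the docs")]))
```

Failing loudly on an unknown kind is a deliberate choice in this sketch: silently routing work to the wrong specialist would defeat the purpose of the pattern.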

Collaborative Discussion

Agents communicate with each other in a shared context, proposing ideas, challenging assumptions, and building on each other's outputs. A coordinator moderates the conversation and synthesizes the final result.

This pattern is the most experimental. It works for problems where the solution path isn't clear upfront and needs to be discovered through exploration.

Best for: Brainstorming, strategy development, problems where multiple perspectives improve the outcome.
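A collaborative discussion can be approximated as agents taking turns on a shared transcript, with a moderator summarizing at the end. This is a toy sketch under strong assumptions: the `proposer` and `critic` functions are hypothetical and deterministic, and a fixed round-robin order stands in for whatever turn-taking a real system would use.

```python
from typing import Callable, List, Tuple

Message = Tuple[str, str]                 # (speaker, text)
Agent = Callable[[List[Message]], str]    # reads the shared transcript, returns a reply


def proposer(transcript: List[Message]) -> str:
    return f"idea {len(transcript) // 2 + 1}"


def critic(transcript: List[Message]) -> str:
    if not transcript:
        return "nothing to review yet"
    return f"concern about {transcript[-1][1]}"


def discuss(agents: List[Tuple[str, Agent]], rounds: int) -> List[Message]:
    """Every agent sees the full shared transcript before adding to it."""
    transcript: List[Message] = []
    for _ in range(rounds):
        for name, agent in agents:
            transcript.append((name, agent(transcript)))
    return transcript


def moderate(transcript: List[Message]) -> str:
    # The coordinator's synthesis step, reduced to a one-line summary.
    proposals = [text for speaker, text in transcript if speaker == "proposer"]
    latest = proposals[-1] if proposals else "none"
    return f"{len(transcript)} messages exchanged; latest proposal: {latest}"


transcript = discuss([("proposer", proposer), ("critic", critic)], rounds=2)
print(moderate(transcript))
```

Unlike the other three patterns, every agent here reads everything the others wrote, so the shared context grows with each turn.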

The Research: Does It Actually Work?

Multi-agent orchestration sounds good in theory. But the evidence matters more than the architecture diagrams. Here's what the research actually shows.

Anthropic's Multi-Agent Research System

Anthropic built a multi-agent system for Claude's Research feature. A lead agent analyzes the user's question, creates a plan, and launches multiple sub-agents to search for information in parallel. Each sub-agent has its own context window and explores a different aspect of the question independently.

Their internal evaluations found that a multi-agent system with Claude Opus 4 as the lead agent and Claude Sonnet 4 as sub-agents outperformed a single Claude Opus 4 agent by 90.2% on research evaluation tasks. The gains were largest on broad queries that required exploring many independent directions at once — like identifying board members across all S&P 500 technology companies.

Their analysis also revealed that token usage explains 80% of performance variance. Multi-agent systems perform better primarily because they can apply more total reasoning capacity to a problem by distributing work across separate context windows. Each agent thinks deeply about its piece without being crowded out by everything else.

Mount Sinai Hospital Clinical AI Study

In March 2026, researchers at Mount Sinai published a study comparing single-agent and multi-agent AI systems on clinical tasks. They tested information retrieval, data extraction, and medication dose calculations under simulated real-world conditions with up to 80 simultaneous tasks.

The results were stark. The single-agent system's accuracy dropped from 73.1% at five tasks to 16.6% at 80 tasks. The multi-agent system held at 90.6% at five tasks and 65.3% at 80 tasks. And the multi-agent system used 65 times fewer computing resources.

The researchers specifically noted that GPT-4.1-mini's multi-agent accuracy stayed between 91.4% and 96% across all batch sizes, while the single-agent version declined from 96% to 33.9%. The architecture mattered more than the model.

Stanford's Token Budget Research

Not all the evidence favors multi-agent systems. In April 2026, Stanford University published research showing that single-agent systems can match or outperform multi-agent architectures on complex reasoning tasks when both are given the same "thinking token" budget.

The key insight: multi-agent systems often win simply because they use more total tokens (more computing resources), not because the architecture itself is inherently superior. For tasks that require strict sequential reasoning — where each step depends tightly on the previous one — the communication overhead between agents can actually hurt performance by 39 to 70%.

This is an important nuance. Multi-agent systems aren't universally better. They're better for specific types of work — particularly tasks that can be parallelized, tasks with large scope, and tasks that benefit from specialist attention.

Databricks Production Data

Databricks reported a 327% increase in multi-agent workflow usage on their platform in just four months (June to October 2025), based on telemetry from over 20,000 organizations including more than 60% of the Fortune 500. This isn't research lab experimentation. It's production adoption at scale.

When Multi-Agent Systems Help (And When They Don't)

Based on the research, here's a practical guide.

Multi-agent orchestration helps most when:

The task has many independent parts. Analyzing 100 companies, reviewing 200 documents, processing 500 data points. Each item can be handled independently by its own agent without depending on the others.

The single-agent success rate is low. Google's research suggests multi-agent systems are most effective when single-agent success rates fall below 45%. If a single agent already does the job well, adding more agents adds cost without meaningful improvement.

The task requires multiple types of expertise. A project that needs research, code, analysis, and writing benefits from specialist agents for each capability rather than one generalist trying to do all four.

Speed matters. Parallel execution can reduce research times by up to 90% compared to sequential processing, according to Anthropic's engineering team. Tasks that would take hours with a single agent can finish in minutes.

Multi-agent orchestration doesn't help when:

The task is tightly sequential. If every step depends entirely on the one before it, there's nothing to parallelize. Adding agents just adds communication overhead.

The task is simple. Single agents handle straightforward, well-defined tasks efficiently. Using a multi-agent system for a simple question is like hiring a project team to change a lightbulb.

Budget is extremely limited. Anthropic reports that multi-agent systems use about 15 times more tokens than ordinary chat interactions. For cost-sensitive applications on routine tasks, a single well-prompted agent may be the smarter choice.
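The guidance above can be condensed into a rough decision helper. Treat this as a sketch of the heuristics, not a validated rule: the 45% cutoff and the 15x token multiplier come from the figures quoted in this article, while `MIN_PARALLEL_PARTS`, the `TaskProfile` fields, and the order of the checks are assumptions made for illustration.

```python
from dataclasses import dataclass

SUCCESS_RATE_CUTOFF = 0.45   # single-agent success rate quoted in this article
TOKEN_MULTIPLIER = 15        # approximate token overhead quoted in this article
MIN_PARALLEL_PARTS = 10      # hypothetical cutoff for "many independent parts"


@dataclass
class TaskProfile:
    independent_parts: int
    single_agent_success_rate: float   # between 0.0 and 1.0
    distinct_skills: int
    tightly_sequential: bool
    affordable_token_multiple: float   # budget relative to a single-agent run


def recommend(profile: TaskProfile) -> str:
    # Disqualifiers first: nothing to parallelize, or no budget for the overhead.
    if profile.tightly_sequential:
        return "single-agent"
    if profile.affordable_token_multiple < TOKEN_MULTIPLIER:
        return "single-agent"
    # Any one of the positive signals is enough in this sketch.
    signals = [
        profile.independent_parts >= MIN_PARALLEL_PARTS,
        profile.single_agent_success_rate < SUCCESS_RATE_CUTOFF,
        profile.distinct_skills > 1,
    ]
    return "multi-agent" if any(signals) else "single-agent"


print(recommend(TaskProfile(100, 0.90, 1, False, 20)))
print(recommend(TaskProfile(1, 0.90, 1, False, 20)))
```

In practice these signals are matters of degree, so a real evaluation would weigh them rather than apply hard thresholds.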

How the Orchestrator Works

The orchestrator is the most important piece of a multi-agent system: the coordinator that every other agent depends on. It has five jobs.

Task decomposition. The orchestrator receives your goal and breaks it into subtasks. This requires understanding what needs to happen, in what order, and which parts can run in parallel. A good orchestrator creates the right granularity — not too broad (losing the benefit of specialization) and not too fine (drowning in coordination overhead).

Agent assignment. The orchestrator decides which agent handles each subtask. In some systems, this is static — a research agent always does research. In more advanced systems, the orchestrator dynamically selects agents based on the specific requirements of each subtask.

Parallel execution management. The orchestrator launches agents simultaneously when their tasks don't depend on each other. It manages the timing, handles agents that finish early, and deals with agents that fail.

Error handling. When an agent fails or produces poor results, the orchestrator needs a recovery plan. It might retry the task, assign it to a different agent, or work around the missing result. Without this, a single failed agent can cascade into a failed project.

Result synthesis. Once all agents complete their work, the orchestrator assembles the results into a coherent final output. This is more than just concatenation — it requires resolving conflicts, removing redundancy, maintaining consistency, and structuring the deliverable in a useful format.
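The responsibilities above fit together roughly as follows. This is a simplified sketch with hypothetical names throughout: `decompose` splits the goal on semicolons instead of asking a model to plan, `run_agent` is a fake worker that fails once for any subtask containing the word "flaky", and agent assignment is omitted because there is only one kind of worker.

```python
import asyncio
from typing import List, Optional


def decompose(goal: str) -> List[str]:
    # Task decomposition. Here the goal is split on ";" for simplicity;
    # a real orchestrator would typically ask a model to produce the plan.
    return [part.strip() for part in goal.split(";") if part.strip()]


async def run_agent(subtask: str, attempt: int) -> str:
    # Hypothetical sub-agent. Subtasks containing "flaky" fail on the first
    # attempt so the retry path has something to handle.
    await asyncio.sleep(0)
    if "flaky" in subtask and attempt == 0:
        raise RuntimeError(f"agent failed on {subtask!r}")
    return f"result for {subtask}"


async def run_with_retry(subtask: str, max_attempts: int = 2) -> Optional[str]:
    # Error handling: retry, then give up and let synthesis work around the gap.
    for attempt in range(max_attempts):
        try:
            return await run_agent(subtask, attempt)
        except RuntimeError:
            continue
    return None


def synthesize(results: List[Optional[str]]) -> str:
    # Result synthesis: drop duplicates, keep order, and flag missing pieces.
    completed = list(dict.fromkeys(r for r in results if r is not None))
    missing = sum(r is None for r in results)
    if missing:
        completed.append(f"({missing} subtask(s) could not be completed)")
    return "\n".join(completed)


async def orchestrate(goal: str) -> str:
    subtasks = decompose(goal)
    # Parallel execution: independent subtasks run concurrently.
    results = await asyncio.gather(*(run_with_retry(s) for s in subtasks))
    return synthesize(list(results))


report = asyncio.run(orchestrate("research market; flaky pricing scrape; draft summary"))
print(report)
```

The retry wrapper keeps one failing agent from cancelling the others, and the synthesis step reports gaps explicitly instead of hiding them.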

The Role of Model Routing in Multi-Agent Systems

One of the more important developments in multi-agent orchestration is intelligent model routing. Different AI models have different strengths. Some are better at writing. Some excel at analysis. Some are optimized for code. Some are fast and cheap. Some are slow and powerful.

In a well-designed multi-agent system, the orchestrator doesn't just assign tasks to agents — it also selects the right AI model for each agent's job. A research sub-agent might use a model optimized for information retrieval. A writing agent might use a model known for coherent long-form output. A code agent might use a model fine-tuned for programming.

This model routing happens automatically. The user doesn't need to know or care which model handles which subtask. They describe what they want, and the system handles the rest.

This approach has a real economic benefit too. Expensive frontier models get reserved for the hardest reasoning steps. Cheaper, faster models handle routine subtasks. The result is better overall quality at a lower total cost than running everything through a single expensive model.
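The cost side of model routing is easy to illustrate with arithmetic. Everything in this sketch is hypothetical: the two model tiers, their prices, and the rule that only subtasks labeled "hard" go to the expensive tier. It shows the cost effect only and says nothing about output quality.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass(frozen=True)
class ModelTier:
    name: str
    cost_per_1k_tokens: float   # made-up prices for illustration


FRONTIER = ModelTier("frontier-model", 0.015)
SMALL = ModelTier("small-model", 0.001)


def route(difficulty: str) -> ModelTier:
    # Reserve the expensive model for the hardest reasoning steps.
    return FRONTIER if difficulty == "hard" else SMALL


def routed_cost(subtasks: List[Tuple[str, int]]) -> float:
    """subtasks is a list of (difficulty, estimated_tokens) pairs."""
    return sum(route(d).cost_per_1k_tokens * tokens / 1000 for d, tokens in subtasks)


def frontier_only_cost(subtasks: List[Tuple[str, int]]) -> float:
    return sum(FRONTIER.cost_per_1k_tokens * tokens / 1000 for _, tokens in subtasks)


plan = [("hard", 4000), ("routine", 2000), ("routine", 2000)]
print(f"routed:        ${routed_cost(plan):.3f}")
print(f"frontier only: ${frontier_only_cost(plan):.3f}")
```

With these invented prices, routing roughly halves the bill because two of the three subtasks never touch the expensive tier.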

The Governance Challenge

Multi-agent systems introduce governance complexities that single-agent systems don't have. When multiple agents run in parallel, accessing tools, browsing the web, and processing data simultaneously, visibility becomes harder.

Only 21% of companies currently have a mature AI agent governance model, according to Deloitte. With multi-agent systems, the challenge multiplies.

Audit trails. When a single agent produces an output, you can trace its reasoning in one conversation thread. When five agents contribute to a result, you need to trace all five threads and understand how the orchestrator combined them. Without proper logging, you lose the ability to understand why the system produced a particular output.

Access controls. Each agent in a multi-agent system may need access to different tools and data sources. A research agent might need web access. A data agent might need database access. Managing permissions across multiple agents requires more careful design than managing one.

Error attribution. When a multi-agent system produces incorrect output, which agent was responsible? Was it the research agent that found bad data? The analysis agent that misinterpreted it? Or the orchestrator that combined the results incorrectly? Pinpointing the source of errors in distributed systems is significantly harder.

Cost visibility. Multi-agent systems use more tokens and more API calls. Without clear cost tracking per agent and per task, spending can grow unpredictably. Organizations need granular visibility into what each agent consumes.

The organizations getting the most value from multi-agent systems in 2026 are the ones that built governance into the architecture from day one — not the ones trying to bolt it on after deployment.
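Three of the governance concerns above (audit trails, access controls, and cost visibility) can share one small piece of infrastructure. The `Governance` class below is a hypothetical sketch, not a reference to any real framework: it checks a per-agent tool allowlist, logs every attempt, and totals token usage per agent.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Dict, List, Set


@dataclass
class AuditEvent:
    agent: str
    task_id: str
    action: str
    tokens: int


class Governance:
    def __init__(self, permissions: Dict[str, Set[str]]):
        self.permissions = permissions          # agent name -> tools it may use
        self.events: List[AuditEvent] = []

    def use_tool(self, agent: str, task_id: str, tool: str, tokens: int) -> None:
        # Access control: denied attempts are logged too, then rejected.
        if tool not in self.permissions.get(agent, set()):
            self.events.append(AuditEvent(agent, task_id, f"DENIED {tool}", 0))
            raise PermissionError(f"{agent} may not use {tool}")
        self.events.append(AuditEvent(agent, task_id, f"used {tool}", tokens))

    def tokens_by_agent(self) -> Dict[str, int]:
        # Cost visibility: total tokens consumed per agent.
        totals: Dict[str, int] = defaultdict(int)
        for event in self.events:
            totals[event.agent] += event.tokens
        return dict(totals)

    def trace(self, task_id: str) -> List[str]:
        # Audit trail: every recorded action for one task, in order.
        return [f"{e.agent}: {e.action}" for e in self.events if e.task_id == task_id]


gov = Governance({"research": {"web"}, "data": {"database"}})
gov.use_tool("research", "task-1", "web", 1200)
gov.use_tool("data", "task-1", "database", 800)
print(gov.trace("task-1"))
print(gov.tokens_by_agent())
```

Routing every tool call through one chokepoint like this is what makes error attribution tractable later, since each output can be traced back to the agent and action that produced it.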

What's Coming Next

Multi-agent orchestration is evolving fast. Here are the most significant changes taking shape.

Asynchronous execution. Today, most orchestrators wait for all sub-agents to finish before assembling the output. The next step is asynchronous systems where agents can spawn new sub-agents, adjust plans mid-execution, and deliver partial results while other agents are still working. Anthropic has identified this as a key area of development for their research system.

Self-improving orchestration. Some systems are starting to learn which agents perform best on which types of tasks, adjusting routing decisions based on past performance. Over time, the orchestrator gets better at assigning work — similar to how a project manager learns which team members are strongest at what.

Cross-platform coordination. Today's multi-agent systems typically run within a single platform. The emerging trend is agents that coordinate across different platforms and tools — one agent working inside your CRM, another in your analytics platform, another browsing the web — all orchestrated toward a single goal.

Smaller, more efficient specialist models. As open-source models improve, multi-agent systems can use lightweight specialized models for routine subtasks instead of relying on expensive frontier models for everything. This is already happening — multi-agent systems that intelligently mix large and small models deliver better results at lower cost than systems that use the same expensive model for every agent.
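The asynchronous execution idea, delivering partial results while other agents are still working, can be sketched with `asyncio.as_completed`. The `sub_agent` coroutine and its artificial delays are assumptions for illustration, and this is not a description of how any particular vendor's system works.

```python
import asyncio
from typing import Dict, List


async def sub_agent(name: str, delay: float) -> str:
    # Stand-in for an agent whose work takes a variable amount of time.
    await asyncio.sleep(delay)
    return f"{name} done"


async def stream_results(jobs: Dict[str, float]) -> List[str]:
    delivered: List[str] = []
    tasks = [asyncio.create_task(sub_agent(name, delay)) for name, delay in jobs.items()]
    # as_completed yields results in finish order, not launch order.
    for finished in asyncio.as_completed(tasks):
        result = await finished
        delivered.append(result)   # a real system would surface this immediately
    return delivered


order = asyncio.run(stream_results({"slow": 0.1, "fast": 0.0, "medium": 0.05}))
print(order)
```

Compared with waiting on every sub-agent before assembling anything, this lets the orchestrator start using the fastest results first.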

The Bottom Line

Multi-agent orchestration isn't a buzzword. It's a practical architecture for solving a real problem: single AI agents break down on complex, large-scale tasks.

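The evidence is strong where the work fits. In the Mount Sinai study, multi-agent systems maintained 65.3% accuracy where single agents dropped to 16.6% under heavy load. In Anthropic's internal evaluations, a multi-agent setup outperformed a single agent by 90.2% on research tasks. On Databricks, multi-agent workflow usage grew 327% in four months across a customer base that includes more than 60% of the Fortune 500.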

But multi-agent systems aren't always the right choice. They add cost, complexity, and governance challenges. They work best for tasks that can be parallelized or that require multiple types of expertise. For simple, sequential tasks, a well-designed single agent is still the better option.

The practical question for any team evaluating AI agents is straightforward. Look at the work you need done. If it's simple and well-defined, a single agent will handle it. If it involves processing large volumes, combining multiple skills, or producing complex deliverables, multi-agent orchestration is likely the architecture that will give you reliable results.

The technology is no longer experimental. The question is how to implement it well.