AI Agents for Market Research: From 4 Hours to 15 Minutes

Manual competitive research takes hours. AI agents with parallel processing deliver the same depth in minutes. Here is the step-by-step workflow with real examples and practical tips.

A competitive analysis of 20 companies takes a skilled analyst four to six hours. Open 20 browser tabs. Visit each company's website. Read their product pages, pricing pages, and about pages. Search for recent news. Check review sites. Cross-reference features. Build a comparison spreadsheet. Write the summary.

With AI agents, the research phase of that same analysis takes 15 to 25 minutes.

This is not because the AI is smarter than the analyst. It is because the agents can research all 20 companies simultaneously while the analyst has to work through them one at a time, and because each agent gives company number 18 the same attention as company number 1, without the fatigue and corner-cutting that inevitably set in after four hours of repetitive research.

This article walks through the exact workflow for using AI agents to do market research, with real examples, practical tips, and honest limitations.

Why Traditional AI Chat Falls Short for Research

Before covering what works, it is worth understanding what does not work.

If you open ChatGPT and type "analyze 20 competitors in the project management space," you will get a response. It will list some companies with brief descriptions. But the output will have several problems.

Stale data. The model's training data has a cutoff. It does not know about product launches, pricing changes, or company developments from the last few months.

Declining quality. As the response gets longer, the analysis gets thinner. Company 1 might get a detailed breakdown. Company 15 gets a single sentence. By company 20, the model is padding with generic statements or fabricating details.

No sources. The output reads as authoritative but does not cite where the information came from. You cannot verify claims without doing your own research anyway.

Surface-level analysis. A single prompt cannot produce the depth of analysis that comes from reading each company's actual website, checking their pricing page, reading customer reviews, and synthesizing all of that information.

These limitations are not about the AI model being bad. They are about the architecture. A single model processing 20 companies in one long response hits context window limitations, attention degradation, and knowledge cutoff constraints. The solution is not a better prompt. It is a different architecture.

How Agent-Based Research Works

The agent-based approach solves each of these problems by changing how the research gets executed.

Step 1: Define the Research Brief

Start by describing what you need clearly. A good research brief for an AI agent covers four things. First, the list of companies (or criteria for finding them). Second, the specific data points you want for each company, such as product features, pricing, target market, recent funding, employee count, and key differentiators. Third, the output format, such as a comparison table, written report, or spreadsheet. Fourth, any specific questions you want answered, such as who has the strongest enterprise offering, which companies raised funding in the last year, or what pricing models are used.

The more specific your brief, the better the output. "Research the project management space" produces a generic overview. "Compare these 20 project management tools on pricing, enterprise features, API availability, and customer review scores from G2" produces a useful deliverable.
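One way to keep a brief specific is to write it down as structured data rather than free text. The Python sketch below is purely illustrative: the `ResearchBrief` class and its `task_for` method are hypothetical names, not part of any particular agent platform. It holds the four parts of a brief described above and renders the per-company instructions that a single research agent might receive.

```python
from dataclasses import dataclass, field

@dataclass
class ResearchBrief:
    """Hypothetical container for the four parts of a research brief."""
    companies: list[str]                  # or criteria for finding them
    data_points: list[str]                # what to collect for every company
    output_format: str = "comparison table"
    questions: list[str] = field(default_factory=list)

    def task_for(self, company: str) -> str:
        """Render the instructions a single research agent would receive."""
        lines = [f"Research {company}. Collect these data points:"]
        lines += [f"- {point}" for point in self.data_points]
        lines.append("Cite a source URL for each data point. If a data point "
                     "is not publicly available, say so instead of guessing.")
        return "\n".join(lines)

brief = ResearchBrief(
    companies=["Company A", "Company B", "Company C"],
    data_points=["pricing tiers", "enterprise features",
                 "API availability", "G2 review score"],
    questions=["Who has the strongest enterprise offering?"],
)
print(brief.task_for("Company A"))
```

Keeping the data points in a single list means every agent is asked for exactly the same fields, which is what makes the later comparison table consistent.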

Step 2: Parallel Agent Deployment

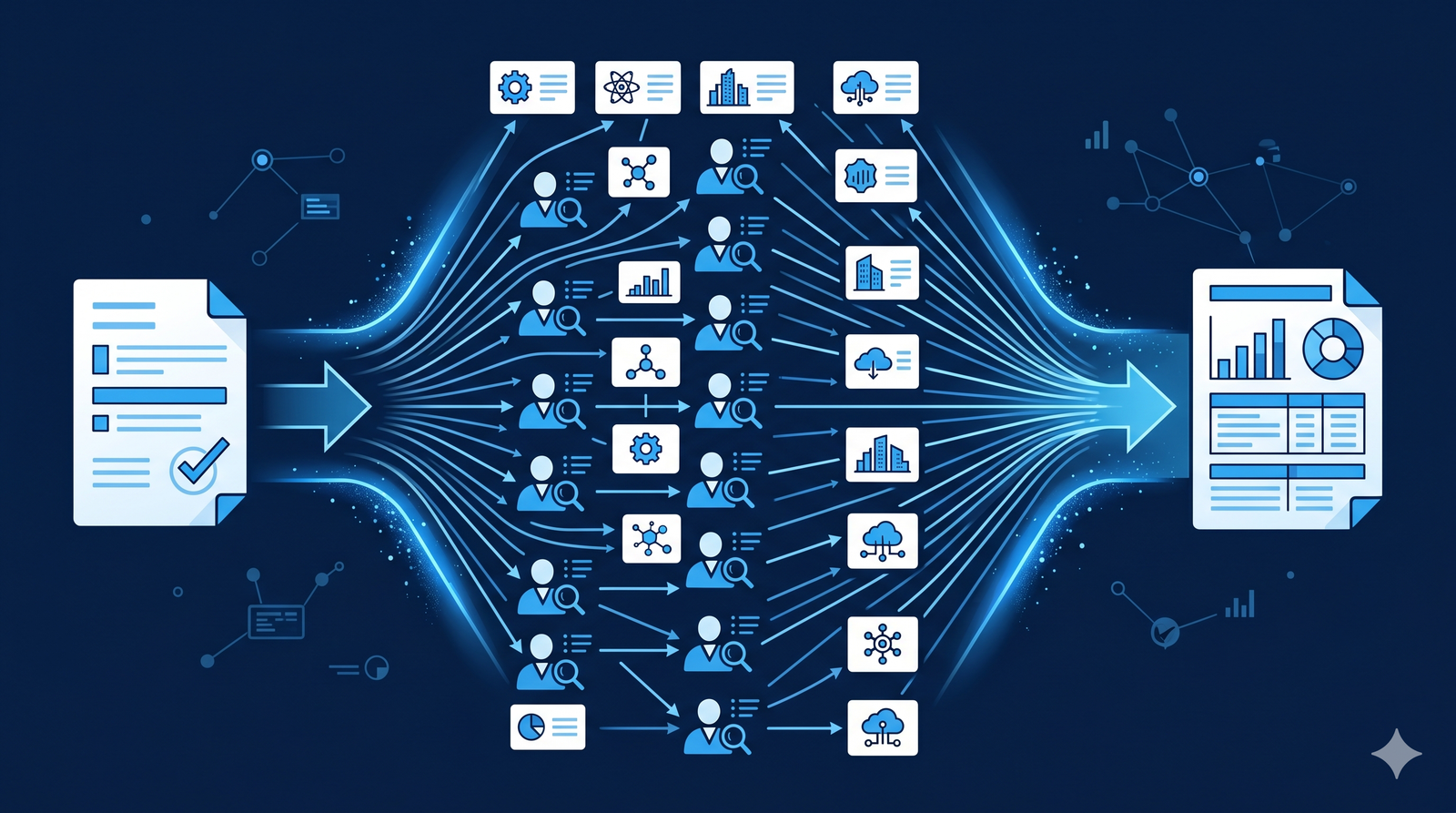

Instead of one agent analyzing all 20 companies sequentially, the system deploys independent agents in parallel, one per company. Each agent has its own fresh context window dedicated entirely to researching its assigned company.

Agent 1 researches Company A. Agent 2 researches Company B. All 20 agents work simultaneously. Each agent browses the company's website, checks pricing pages, reads recent news, and gathers the specific data points defined in the research brief.

This is the critical difference from traditional AI chat. Agent 20 gets the same quality of attention as Agent 1 because each agent operates independently with a clean context.
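The fan-out pattern itself is simple to sketch. In the Python example below, `research_company` is a hypothetical placeholder that only sleeps; in a real system it would open a fresh model session with browsing and search tools. `asyncio.gather` runs one task per company concurrently, and the semaphore (an assumed safeguard, not something the workflow above requires) caps how many agents run at once, for example to respect API rate limits.

```python
import asyncio

async def research_company(company: str, data_points: list[str]) -> dict:
    """Hypothetical stand-in for one research agent.

    A real agent would browse, search, and read; this placeholder only
    simulates the wait so the fan-out pattern can run end to end.
    """
    await asyncio.sleep(0.01)  # placeholder for browsing and search
    return {"company": company, **{point: None for point in data_points}}

async def deploy_agents(companies: list[str], data_points: list[str],
                        max_concurrent: int = 20) -> list[dict]:
    """Run one independent agent per company, all at the same time."""
    limit = asyncio.Semaphore(max_concurrent)  # cap simultaneous agents

    async def run_one(company: str) -> dict:
        async with limit:
            return await research_company(company, data_points)

    # gather() returns results in input order, so they line up with `companies`
    return await asyncio.gather(*(run_one(c) for c in companies))

companies = [f"Company {i}" for i in range(1, 21)]
results = asyncio.run(deploy_agents(companies, ["pricing", "target market"]))
print(len(results), results[0]["company"], results[-1]["company"])
```

Each call to `research_company` receives only its own company name and the shared list of data points, so no agent sees another agent's work, which mirrors the clean, per-company context described above.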

Step 3: Data Gathering

Each agent uses real-time tools to gather current information: web browsing to access company websites, product pages, and pricing pages; search to find recent news, funding announcements, and product updates; and document reading when you provide existing reports or data files as inputs.

Because the agents browse the live web, the research reflects current information rather than the model's training data. Pricing that changed last week should show up correctly, and a feature launched yesterday can be included, provided it is published on a page the agent can reach.
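Live browsing also makes it possible to address the "no sources" problem from earlier: each data point can carry the page it came from and when it was retrieved. The schema below is a hypothetical sketch, not a standard format; the `Finding` class, its field names, the example value, and the example URL are all illustrative assumptions.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Optional

@dataclass(frozen=True)
class Finding:
    """One data point gathered by an agent, plus its provenance (hypothetical schema)."""
    company: str
    data_point: str
    value: Optional[str]        # None means "not found / not publicly available"
    source_url: Optional[str]   # page the value was read from
    retrieved_at: datetime      # when the agent read it

    def is_verifiable(self) -> bool:
        """A human can only check a finding that has both a value and a source."""
        return self.value is not None and self.source_url is not None

f = Finding(
    company="Company A",
    data_point="pricing",
    value="$10 per user per month (illustrative)",
    source_url="https://example.com/pricing",
    retrieved_at=datetime.now(timezone.utc),
)
print(f.is_verifiable())
```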

Step 4: Synthesis and Output

A coordinator agent collects results from all 20 research agents and assembles them into the requested format. If you asked for a comparison table, you get a structured table with consistent data points across all 20 companies. If you asked for a written report, you get narrative analysis organized by theme or by company.

The coordinator also identifies patterns across the dataset: common pricing models, feature gaps, market positioning clusters, and competitive dynamics that only become visible when you analyze the full landscape together.
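The mechanical part of the coordinator's job, merging per-agent results into one table and counting recurring values, can be sketched in a few lines of Python. This is a simplified illustration under assumed inputs (each result is a dict with a `company` key and made-up example values); a real coordinator agent would also write the narrative analysis, which this code does not attempt. Missing values are shown as "not found" so gaps stay visible instead of disappearing from the table.

```python
from collections import Counter

def build_comparison_table(results: list[dict], columns: list[str]) -> str:
    """Assemble per-agent results into one markdown table with consistent columns."""
    header = "| Company | " + " | ".join(columns) + " |"
    divider = "|" + " --- |" * (len(columns) + 1)
    rows = [
        "| " + r["company"] + " | "
        + " | ".join(str(r.get(col) or "not found") for col in columns) + " |"
        for r in results
    ]
    return "\n".join([header, divider, *rows])

def common_values(results: list[dict], column: str) -> list[tuple[str, int]]:
    """Count how often each value appears in one column, most common first."""
    return Counter(r[column] for r in results if r.get(column)).most_common()

results = [
    {"company": "Company A", "pricing_model": "per seat", "api": "yes"},
    {"company": "Company B", "pricing_model": "flat rate", "api": "yes"},
    {"company": "Company C", "pricing_model": "per seat"},
]
print(build_comparison_table(results, ["pricing_model", "api"]))
print(common_values(results, "pricing_model"))
```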

Step 5: Your Expert Review

The output is a first draft, not a final deliverable. Your role is to review the research for accuracy, add strategic context that the AI does not have (your client's specific priorities, organizational constraints, relationships with vendors), correct any errors, and refine the analysis with your professional judgment.

This review typically takes 15 to 30 minutes. Adding the 5 to 10 minutes spent on the brief and the 15 to 25 minute agent run, the total workflow, from defining the brief to delivering the final analysis, takes roughly 35 to 65 minutes instead of four to six hours.
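Adding up the per-stage estimates given in this article (5 to 10 minutes to write the brief, 15 to 25 minutes for the agent run, and 15 to 30 minutes of review) is a quick sanity check on the overall time savings. The ratio below pairs the slowest agent workflow with the fastest manual one for a conservative figure, and the fastest agent workflow with the slowest manual one for an optimistic figure.

```python
# Per-stage estimates (minutes) quoted in this article: (low, high)
stages = {
    "write the brief": (5, 10),
    "agent run": (15, 25),
    "expert review": (15, 30),
}
manual = (4 * 60, 6 * 60)  # four to six hours of manual research, in minutes

total_low = sum(low for low, _ in stages.values())
total_high = sum(high for _, high in stages.values())
print(f"Agent workflow: {total_low}-{total_high} minutes")
# Conservative: fastest manual vs slowest agent; optimistic: the reverse
print(f"Speedup: {manual[0] / total_high:.1f}x to {manual[1] / total_low:.1f}x")
```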

Practical Examples

Example 1: Vendor Evaluation

A fractional CTO needs to evaluate 15 cloud infrastructure providers for a client migrating from on-premises data centers. The evaluation criteria include pricing models, compliance certifications, geographic availability, support tiers, and migration assistance.

Without agents: 8 hours of manual research, visiting 15 websites, comparing pricing calculators, checking compliance documentation, and building a comparison matrix.

With agents: Define the evaluation criteria. Deploy 15 agents in parallel. Each agent researches one provider, gathering pricing details, compliance certifications, data center locations, and support information. The coordinator compiles a comparison matrix and written summary. Total time: 25 minutes plus 20 minutes of expert review.

Example 2: Market Landscape Report

A strategy consultant needs a comprehensive overview of the AI-powered customer support market for a client considering entering the space. The report should cover the top 30 companies, their market positioning, pricing, key customers, and technology differentiation.

Without agents: 12 to 16 hours of research across 30 companies, synthesized into a 20-page report.

With agents: Define the research framework. Deploy 30 agents in parallel, each researching one company. The coordinator produces a structured report organized by market segment, with comparison tables and competitive positioning analysis. Total time: 30 minutes plus 45 minutes of expert review and strategic commentary.

Example 3: Weekly Competitive Monitoring

A product team wants to track 10 competitors on a weekly basis, monitoring for pricing changes, feature launches, hiring patterns, and press mentions.

With automated agents: Set up a recurring weekly agent that monitors the 10 competitors. Every Monday, the agent checks each competitor's website, searches for recent news, reviews their job postings, and compiles a summary of changes. The output arrives in your workspace ready for review before your Monday standup.

This replaces what would otherwise be 2 to 3 hours of manual monitoring every week. Over 52 weeks, that is roughly 100 to 150 hours of analyst time reclaimed.
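The core of a weekly monitor is comparing this week's snapshot with last week's and reporting only what changed. The function below is a minimal sketch under an assumed data layout (competitor name mapped to a dictionary of field values); `diff_snapshots` and the example fields are hypothetical, and the values are made-up placeholders.

```python
def diff_snapshots(last_week: dict, this_week: dict) -> list[str]:
    """List field-level changes between two weekly snapshots.

    Each snapshot maps competitor name -> {field name: value}. Competitors
    or fields that appear or disappear are reported with a None side.
    """
    changes = []
    for competitor in sorted(set(last_week) | set(this_week)):
        old = last_week.get(competitor, {})
        new = this_week.get(competitor, {})
        for name in sorted(set(old) | set(new)):
            if old.get(name) != new.get(name):
                changes.append(f"{competitor}: {name} changed from "
                               f"{old.get(name)!r} to {new.get(name)!r}")
    return changes

last_week = {
    "Company A": {"starter_price": "$10/seat", "open_roles": 12},
    "Company B": {"starter_price": "$8/seat", "open_roles": 5},
}
this_week = {
    "Company A": {"starter_price": "$12/seat", "open_roles": 12},
    "Company B": {"starter_price": "$8/seat", "open_roles": 9},
}
for line in diff_snapshots(last_week, this_week):
    print(line)
```

Reporting only the differences keeps the Monday summary short: unchanged competitors produce no output at all.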

Limitations and Honest Caveats

AI agents are powerful for research, but they have real limitations you should understand.

Verification is still necessary. Agents can access websites and search results, but they can occasionally misread pages, miss context, or report outdated cached information. Always verify critical data points, especially pricing and feature claims, against the actual source.

Gated content is generally not accessible. Agents typically cannot log into platforms, access paywalled content, or retrieve information that requires authentication. If a competitor's pricing is only available through a sales call, a well-instructed agent should report "pricing not publicly available" rather than inventing a number, and it is worth stating that instruction explicitly in your brief.

Qualitative judgment is limited. An agent can tell you what features a company offers. It cannot tell you how it feels to use their product, whether their support team is responsive, or whether their enterprise sales process is painful. Qualitative insights still require human experience.

Complex analysis requires expert framing. The quality of agent research depends directly on the quality of your brief. A vague request produces a vague output. The most effective researchers spend 5 to 10 minutes defining a specific, structured brief that guides the agents precisely.
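For the verification caveat above, one practical habit is to decide in advance which data points always get a human check. This sketch assumes findings are dicts with `data_point` and `source_url` keys (a hypothetical layout) and that pricing and enterprise features are the critical fields; adjust `CRITICAL_DATA_POINTS` to your own priorities. Anything critical, or anything the agent could not source, goes into the review queue.

```python
# Assumption: which data points you treat as critical enough to always re-check.
CRITICAL_DATA_POINTS = {"pricing", "enterprise_features"}

def verification_queue(findings: list[dict]) -> list[dict]:
    """Return the findings a human should check against the source first.

    A finding is queued if it is a critical data point or if the agent
    did not record a source URL for it.
    """
    return [
        f for f in findings
        if f["data_point"] in CRITICAL_DATA_POINTS or not f.get("source_url")
    ]

findings = [
    {"company": "Company A", "data_point": "pricing",
     "source_url": "https://example.com/pricing"},
    {"company": "Company A", "data_point": "target_market",
     "source_url": "https://example.com/about"},
    {"company": "Company B", "data_point": "employee_count", "source_url": None},
]
for f in verification_queue(findings):
    print(f["company"], f["data_point"])
```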

Tips for Getting the Best Results

Be specific about data points. Instead of "research this company," specify "find their pricing tiers, enterprise features, API documentation quality, customer reviews on G2, and any news from the last 90 days."

Use parallel processing for breadth. The biggest advantage of agents over chat-based AI is parallel execution. Use it for tasks that involve many items: 20 companies, 50 products, 100 research papers.

Save and reuse your best briefs. When you write a research brief that produces excellent results, save it as a template. Shared prompt libraries make your best briefs available to your entire team.

Combine with internal data. The most valuable research combines public web data with your internal information. Connect your CRM, documents, and databases to give agents context about your existing relationships and priorities.

Automate recurring research. If you do the same type of research regularly (weekly competitive monitoring, monthly market updates), set it up as an automated recurring workflow instead of running it manually each time.
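Saved briefs work well as parameterized templates: the structure stays fixed, and only the category, company list, and data points change per project, which also makes them easy to rerun on a schedule. The sketch below uses Python's standard `string.Template`; the wording of `COMPETITOR_BRIEF` and the `render_brief` helper are illustrative assumptions, not a recommended canonical brief.

```python
from string import Template

# Hypothetical saved brief; $placeholders are filled in per project.
COMPETITOR_BRIEF = Template(
    "Compare these $count $category tools: $companies. "
    "For each, find: $data_points. "
    "Return a comparison table and cite a source for every data point."
)

def render_brief(category: str, companies: list[str], data_points: list[str]) -> str:
    """Fill the saved template for one project; raises KeyError if a field is missing."""
    return COMPETITOR_BRIEF.substitute(
        count=len(companies),
        category=category,
        companies=", ".join(companies),
        data_points=", ".join(data_points),
    )

print(render_brief("project management",
                   ["Company A", "Company B"],
                   ["pricing tiers", "API availability"]))
```

Using `substitute` rather than `safe_substitute` means a template with an unfilled placeholder fails loudly instead of sending an incomplete brief to the agents.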

The Bottom Line

Market research is one of the clearest use cases for AI agents. The work is important but time-consuming. It involves processing many independent sources. Quality degrades when done manually under time pressure. And the output follows consistent patterns that agents can produce reliably.

The shift from manual research to agent-based research does not replace the researcher. It changes what the researcher spends their time on. Less time gathering data. More time analyzing it. Less time formatting tables. More time providing strategic insight.

For consultants, fractional executives, product teams, and strategy professionals, this means more research gets done, at greater depth, in less time. That is not a marginal improvement. It is a fundamental change in what is possible within a workday.